AI in mental health support offers scalable assessment and monitoring that complements traditional care. It can triage risk, track symptoms, and provide between-session prompts while preserving patient autonomy. Data governance, privacy protections, and transparent consent are essential, as are clinician oversight and clear safety protocols. When implemented responsibly, AI informs decisions, supports collaboration, and may improve outcomes. The balance between innovation and trust remains critical, inviting careful consideration of ethics, efficacy, and accountability as care evolves.

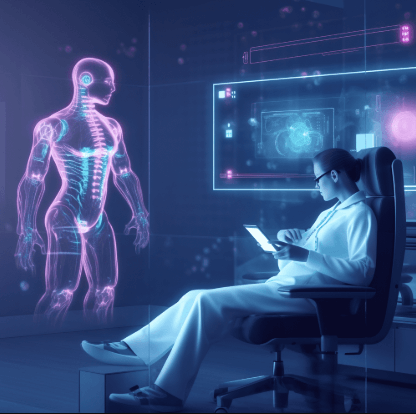

What AI in Mental Health Can Do for You

AI in mental health can assist with assessment, monitoring, and support, offering scalable tools that complement traditional care. AI-driven insights inform clinician decisions while preserving patient autonomy. Data governance frameworks set the rules for privacy, consent, and transparent use of data.

This approach supports evidence-based interventions, respects individual preferences, and fosters collaborative, data-informed care without diminishing human-centered judgment.

How AI Triages, Monitors, and Supports Between Sessions

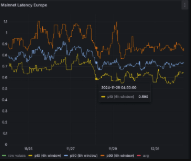

AI-assisted tools extend clinical care beyond scheduled appointments, enabling ongoing assessment, timely monitoring, and proactive support between sessions. AI triages risk, flags deterioration, and prioritizes urgent contact while preserving patient autonomy. It interprets trends to inform care plans, supports therapeutic check-ins, and prompts coping strategies. Data privacy and user consent govern how data is handled, so that monitoring stays safe, accessible, and trustworthy.
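To make the idea of flagging deterioration from trends concrete, here is a minimal sketch in Python. It is illustrative only: the function name `triage_level`, the scoring scale (higher means more severe), and every threshold are assumptions made for this example, not clinical cut-offs and not taken from any real tool. The result is meant to help prioritize a clinician's attention, not to replace it.

```python
from statistics import mean


def triage_level(scores, window=3, rise_threshold=5.0, urgent_score=20):
    """Return 'urgent', 'review', or 'routine' for a list of check-in scores.

    scores: chronological self-reported symptom totals; higher is assumed
            to mean more severe.
    window, rise_threshold, urgent_score: hypothetical placeholder values,
            not validated clinical thresholds.
    """
    if not scores:
        return "routine"

    # A single very high latest score is escalated immediately.
    if scores[-1] >= urgent_score:
        return "urgent"

    # Otherwise compare the most recent window with the window before it.
    if len(scores) >= 2 * window:
        recent = mean(scores[-window:])
        baseline = mean(scores[-2 * window:-window])
        if recent - baseline >= rise_threshold:
            return "review"

    return "routine"
```

For example, a run of check-ins that climbs from around 8 to around 15 would come back as `review`, while a stable series would stay `routine`. In practice a clinician would set any thresholds and review every flag.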

Key Ethics, Safety, and Privacy Considerations

The central question is whether AI-enabled mental health tools protect patients while still enabling effective care. That calls for transparent data practices, accountable governance, and robust risk assessment. Privacy risks require proportionate safeguards, and consent must be clear and ongoing. Ethical deployment balances autonomy with vulnerability, prioritizing patient dignity, trust, and clinical outcomes.

How to Evaluate and Adopt AI Tools Responsibly

Evaluating and adopting AI tools in mental health requires a structured, evidence-based approach that prioritizes patient safety, effectiveness, and accountability. Practitioners assess privacy safeguards, data governance, and ethics risk, ensuring transparent criteria and ongoing oversight. The process supports user autonomy, emphasizes clinician collaboration, and promotes iterative validation, safety monitoring, and clear consent. Responsible adoption aligns with clinical integrity, patient trust, and measurable outcomes.

Frequently Asked Questions

What Training Data Shapes an AI’s Mental Health Responses?

An AI’s mental health responses are shaped by diverse, ethically sourced text and anonymized transcripts. The emphasis is on data privacy and bias mitigation, which help prevent harm, improve reliability, and support informed, autonomous decisions by users.

Can AI Replace Human Therapists Entirely?

AI cannot fully replace human therapists. Ethical limits and the boundaries of the therapeutic relationship constrain what AI can do. Used collaboratively, it can enhance care while the therapist supplies the empathy and nuanced judgment that treatment depends on and the patient keeps their autonomy.

How Quickly Do AI Tools Learn From User Input?

Some systems adapt rapidly, but the learning pace varies with data quality and the safeguards in place, and any adaptation requires ongoing evaluation. Responsible tools protect data privacy, stay within evidence-based limits, and are transparent with users about how their input is used.

Are There Risks of Misdiagnosis With AI Support?

Yes. AI support carries a risk of misdiagnosis, though advances aim to improve diagnostic accuracy. Potential biases and gaps in the underlying data are the main concerns, so clinicians should monitor outcomes and keep decisions transparent, safe, and patient-centered.

How Do AI Tools Handle Crisis or Self-Harm Ideation?

AI crisis response relies on real-time risk flags, escalation protocols, and human collaboration. Self-harm safeguards guide alerts, safe handling, and referrals. A clinically grounded, evidence-based tool respects autonomy while prioritizing safety and supportive care.

Conclusion

AI in mental health offers scalable assessment, monitoring, and proactive support that complements clinical care. When deployed with robust governance, privacy protections, and transparent consent, these tools can enhance safety, personalize interventions, and support clinician decision-making. Between-session AI can aid early risk detection and continuity of care while preserving patient autonomy. However, responsible use requires clear safety protocols, ongoing evaluation, and accountability. In this evolving landscape, AI is a useful partner, but it remains a co-pilot, not the pilot.